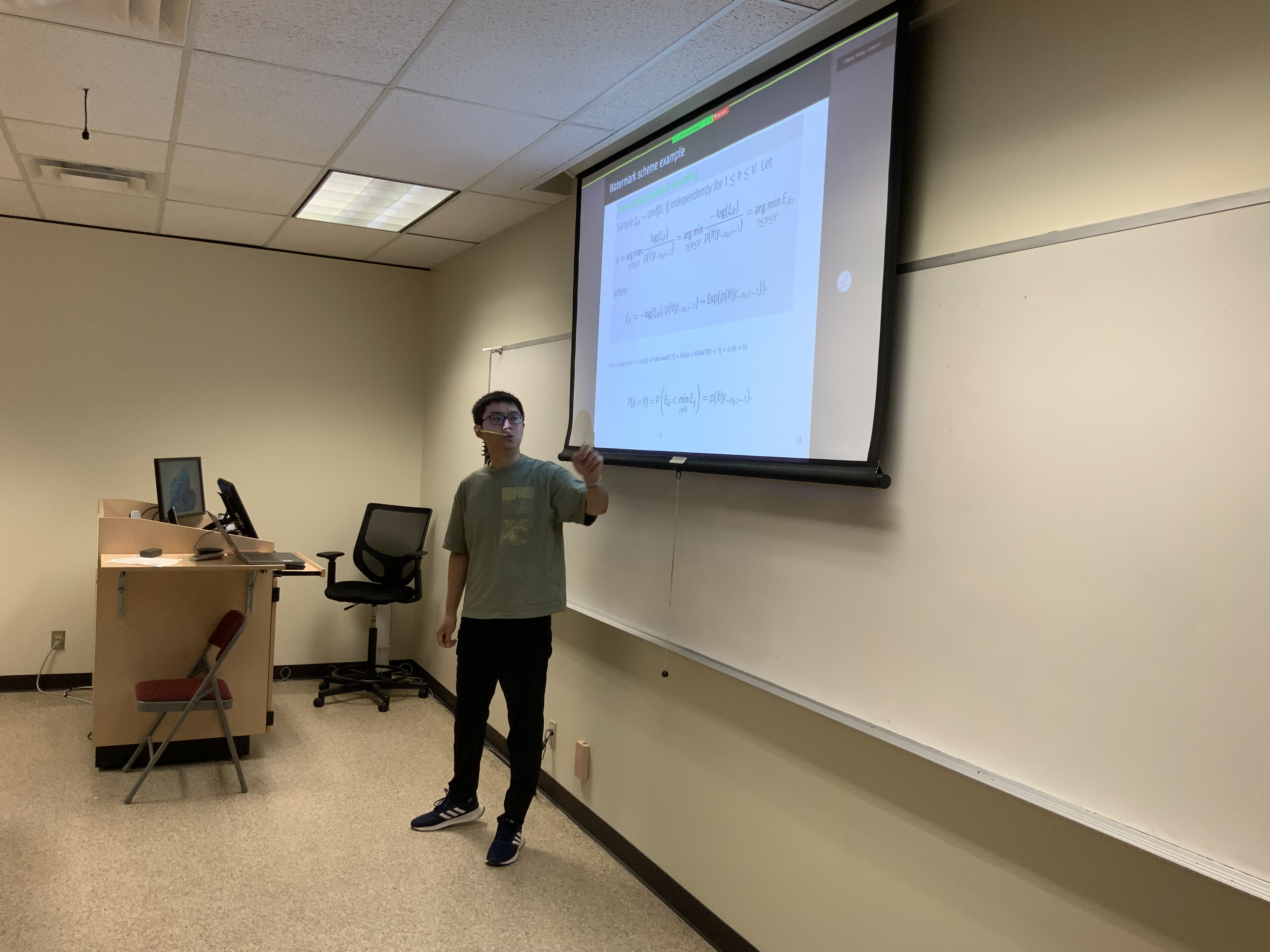

Stat Cafe - Anthony Li

Segmenting Watermarked Texts From Language Models

- Time: Wednesday 9/18/2024 from 11:30 AM to 12:30 PM

- Location: BLOC 411

- Pizza and drinks provided

Description

Watermarking of LLM-generated text is a technique that embeds (nearly) unnoticeable statistical signals within generated content to help trace its source. We focus on a scenario where an untrusted third-party user sends prompts to a trusted large language model (LLM) provider, who then generates watermarked text from its LLM. This setup makes it possible for a detector to later identify the source of the text if the user publishes it. The user can modify the generated text by substitutions, insertions, or deletions. Taking mainly the detector's perspective, our objective is to develop a statistical method for deciding whether a published text is LLM-generated. We further propose a methodology to segment the published text into watermarked and non-watermarked substrings. The proposed approach is built upon randomization tests and change point detection techniques. We demonstrate that our method ensures Type I and Type II error control and can accurately identify watermarked substrings by finding the corresponding change point locations. To validate our technique, we apply it to texts generated by several language models with prompts extracted from Google’s C4 dataset and obtain encouraging numerical results.
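For readers new to the two ingredients named in the abstract, the toy sketch below illustrates a randomization test followed by a single change point estimate. It is not the speaker's method: the 10-token vocabulary, the "copy the key" watermark, the match-count statistic, and every function name are assumptions made purely for illustration.

```python
import random

VOCAB_SIZE = 10  # assumed toy vocabulary; real models use far larger ones


def match_scores(tokens, key):
    """Per-position score: 1 if the token agrees with the watermark key, else 0."""
    return [1 if t == k else 0 for t, k in zip(tokens, key)]


def randomization_pvalue(tokens, key, n_resamples=999, seed=0):
    """P-value for the null hypothesis that the text is independent of the key.

    The observed match count is compared with match counts under freshly
    drawn keys, which play the role of the null distribution.
    """
    rng = random.Random(seed)
    observed = sum(match_scores(tokens, key))
    at_least_as_large = 0
    for _ in range(n_resamples):
        fake_key = [rng.randrange(VOCAB_SIZE) for _ in tokens]
        if sum(match_scores(tokens, fake_key)) >= observed:
            at_least_as_large += 1
    return (1 + at_least_as_large) / (1 + n_resamples)


def estimate_change_point(scores):
    """Return the split index tau that maximizes |S_tau - (tau / n) * S_n| (CUSUM)."""
    n = len(scores)
    total = sum(scores)
    best_tau, best_value, prefix = 0, -1.0, 0
    for tau in range(1, n):
        prefix += scores[tau - 1]
        value = abs(prefix - tau * total / n)
        if value > best_value:
            best_tau, best_value = tau, value
    return best_tau


def make_toy_text(n_plain=100, n_marked=100, seed=1):
    """Hypothetical data: unwatermarked tokens followed by watermarked ones."""
    rng = random.Random(seed)
    key = [rng.randrange(VOCAB_SIZE) for _ in range(n_plain + n_marked)]
    plain = [rng.randrange(VOCAB_SIZE) for _ in range(n_plain)]
    marked = key[n_plain:]  # toy watermark: the generator simply copies the key
    return plain + marked, key
```

Calling `tokens, key = make_toy_text()` and then `randomization_pvalue(tokens, key)` should give a small p-value, since half of the toy text follows the key, while `estimate_change_point(match_scores(tokens, key))` should land near position 100, where the watermarked segment begins. Handling user edits (substitutions, insertions, deletions) and multiple change points, as described in the abstract, is beyond this sketch.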
